Category: Charts & Graphs

-

Billion Dollar Babies

I sat on this post until after National Adoption Month because it seemed like the polite thing to do and because I didn’t have time to finish it until now. It’s about adoption. Not whether adoption is good or bad. My feelings about adoption are whatever my client’s feelings are — and it should be…

-

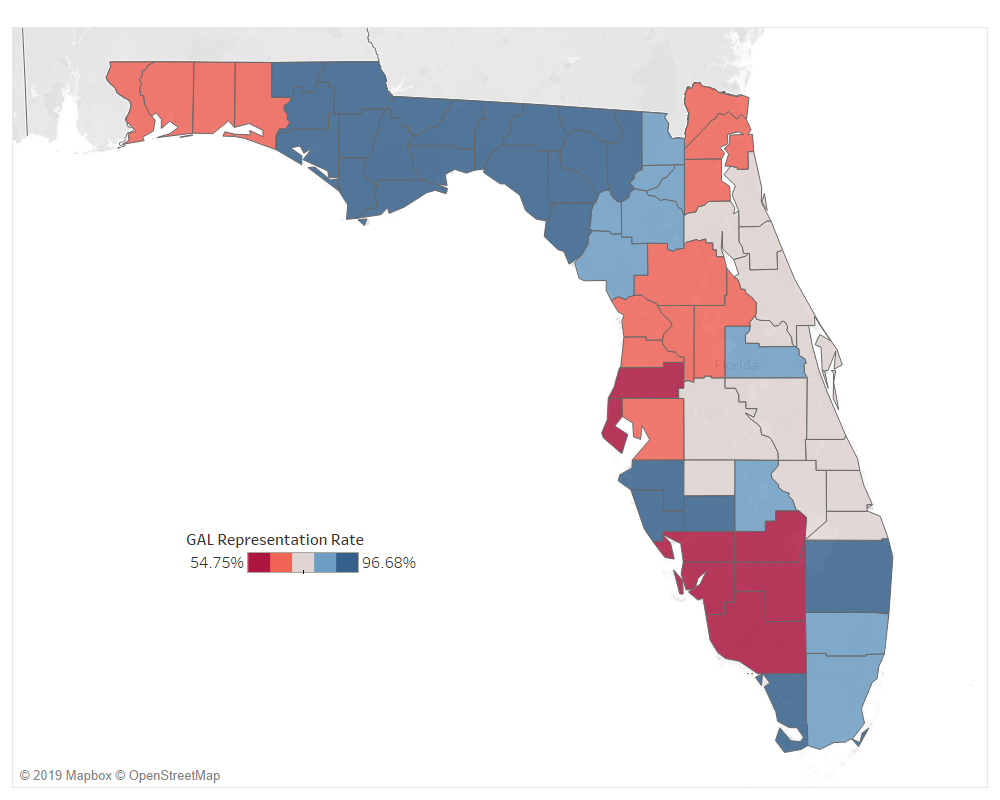

A Reluctant Post About the Guardian ad Litem Program: Its Ethics, Efficacy, & Future

The Guardian ad Litem Program started as a scrappy community of advocates and gadflies who sought to bring attention and change to the dependency system. It is now a state agency that’s been appropriated over $600 million in the last 15 years. There has never been a comprehensive study to determine whether the Program accomplishes…

-

Plotting Permanency: Using the public FSFN database to explore exit patterns in Florida foster care

This post introduces a new public FSFN dashboard on permanency timing in Florida’s child welfare system. If you want to just play with the dashboard, you can find it here. All but one of the graphics in this post come from the dashboard. Every year, in legislatures across the country, well-meaning people propose bills to…

-

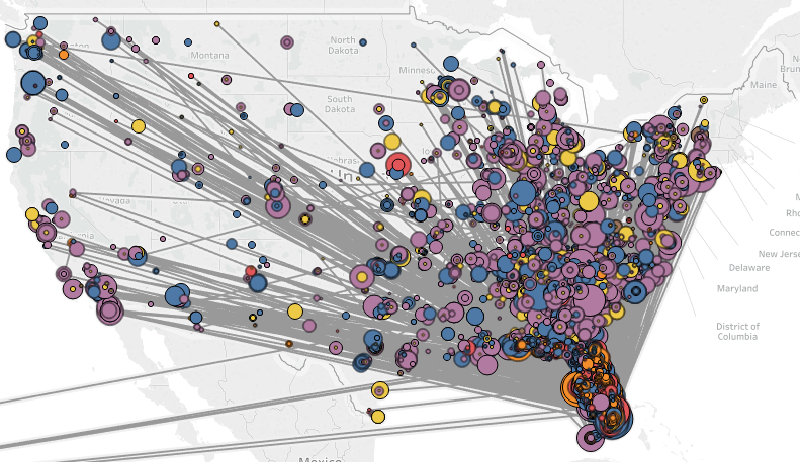

I made a “Yelp for Child Welfare” Because We Need to Know More (Not Less) About Foster Care Providers

This post introduces a new public FSFN dashboard: the Placement Provider Info Dashboard. If you want to jump straight there, feel free. You should click the fullscreen button in the bottom right corner. Below is the why and how of it. There is a well-meaning bill working through the legislature that would exempt the names…

-

This is not okay – Visualizing foster care placement instability

Christopher O’Donnell and Nathaniel Lash at the Tampa Bay Times recently published an outstanding investigative piece on the harmful number of placement changes some kids experience while in foster care. They write: Foster care is intended to be a temporary safety net for children at risk of neglect and abuse at home. Those children, many…

-

Only 67 kids have been removed on Christmas Day in Florida (since 2007)

A video of a child being forcibly removed from his mother has been in the news lately. It’s brutal to watch. A group of police officers and security guards yank at the one-year-old while another swings a taser wildly around the room at anyone who gets too close. The woman is on the floor. Her…

-

How long do Florida appeals take?

The First DCA published statistics on its caseloads and decisions. But notably (as appellate judges like to say), the length of time they take to resolve cases was not reported. It motivated me to update the How Long do Appeals Take Tableau. The answer? Probably 120 to 170 days for a Dependency case, 260 to…

-

It’s sort of official: Florida foster care is in a contraction period

In July of this year, the Florida foster care system did something unseen since February 2014: it shrank. For the first time in over 50 months, the year-over-year (YOY) change in out-of-home care numbers went down by 45 children. By August it was down 118, and the reports out this month for October show a contraction of…

-

Florida’s foster care system is racially biased, too

The ACLU of Florida did a fantastic (and super data-heavy) study of racial and ethnic disparities in the Miami criminal justice system called Unequal Treatment. It’s amazing and you should check it out. The study reminded me that DCF publishes its own statistics on race, but they are buried in the Trend Report Excel graveyard.…

-

Is my appeal taking a long time? Probably not.

Our office has been handling more appeals lately, and I am learning the rhythm of the process a little better each day. Appeals seem to go like this: (1) you lose or win at trial and feel really emotional about it, (2) you file your appeal or get noticed that someone filed one on you,…