Category: Charts & Graphs

-

CBC Funding Doesn’t Make Children Live in Offices

CBCs like to blame funding for their difficulties. But it’s not that simple.

-

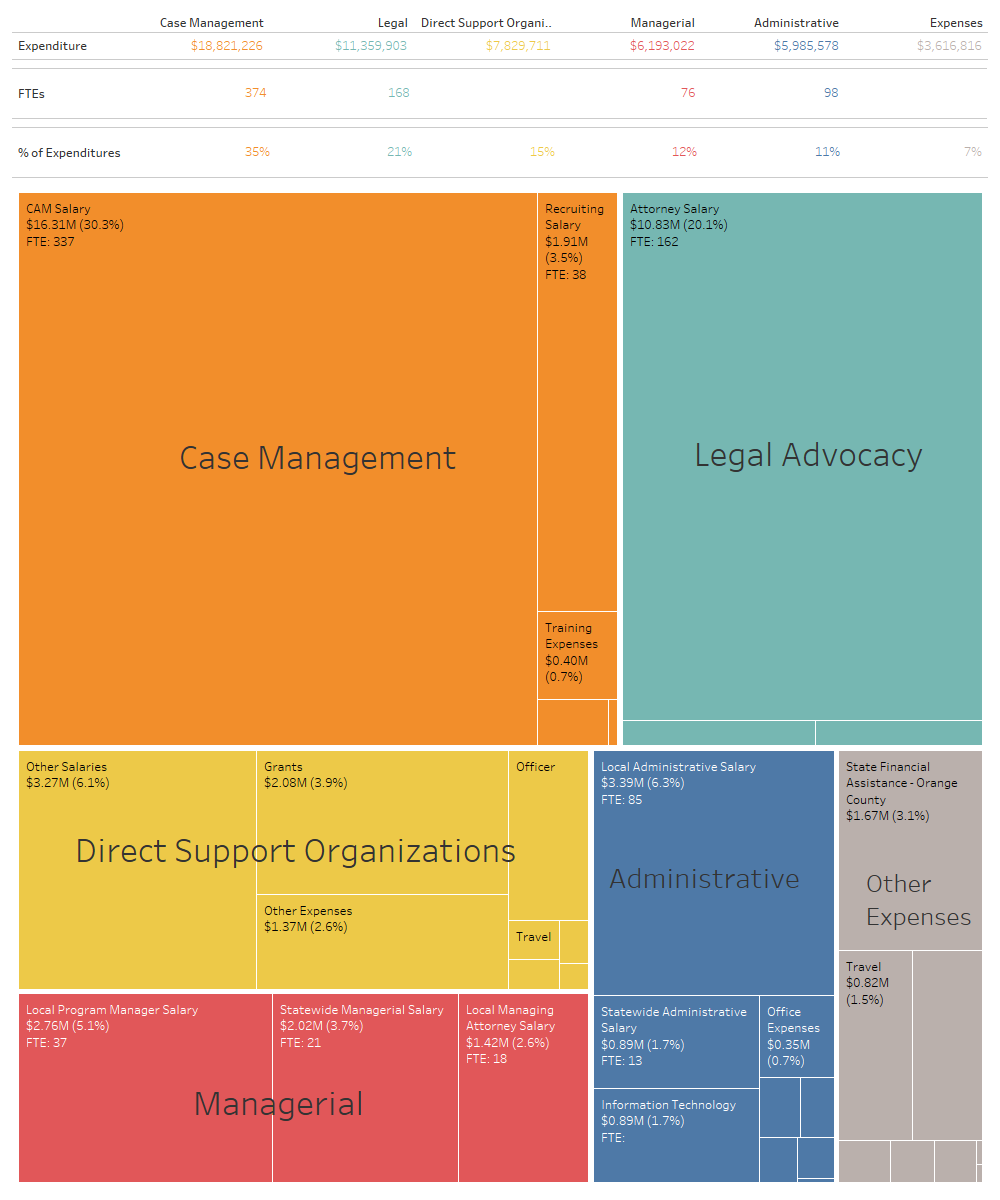

The GAL Program’s Budget in 31 Charts

The GAL Program gets more money from the legislature each year, but never seems to get closer to 100% representation. Here’s where the money goes.

-

I never want to write about COVID again

I wrote last month that if there wasn’t a big jump in the number of kids being removed by October (the typical high point for fall removals), then I was going to call the COVID prophecies bunk. And here we are with the October data and…nothing. The October removal numbers came in lower than expected. November…

-

What COVID did and didn’t do to Florida’s Foster Care System

I haven’t written about the COVID numbers for a few months because there hasn’t been much new to say. We’ve needed more time to tell if the initial shocks in April were a blip or a new normal. We now have 6 months of data to compare and the answer looks pretty clear to me.…

-

COVID Month 4: Still no removal wave, but discharges are struggling

Florida DCF’s numbers are out for June and, in terms of investigations and removals, they were spectacularly normal. The predicted wave of removals isn’t here yet, if it’s ever coming at all. To the contrary, the system is struggling to discharge kids, as reunification and adoptions have both significantly decreased. We also have our first…

-

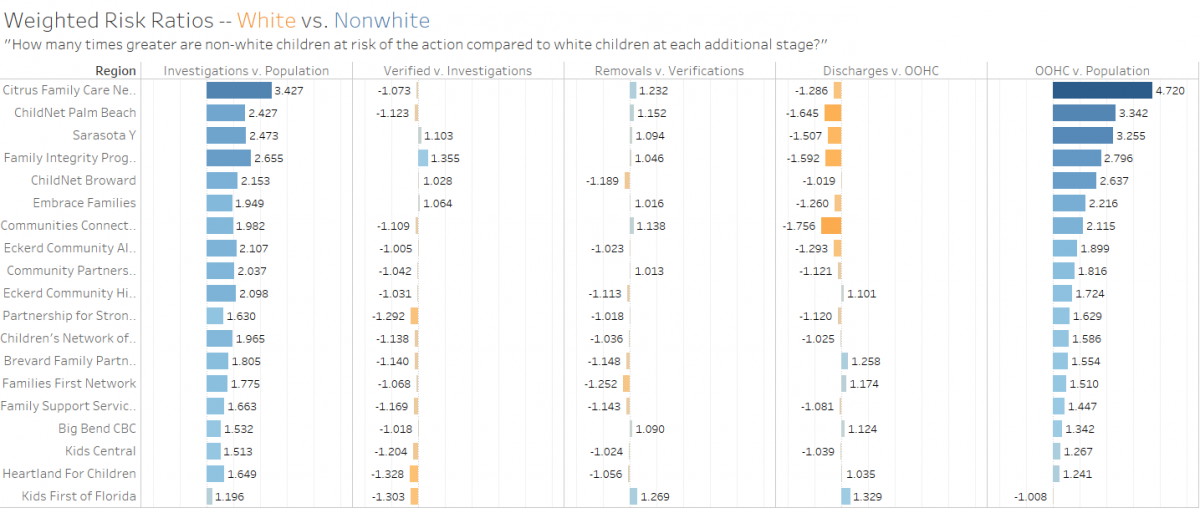

Comparing Racial Disparity in Florida’s Child Welfare System

A critical step in reducing racial disparity in the child welfare system is having a way of measuring it. The standard methods, including the one used by DCF in its public dashboards, all have documented weaknesses that either under- or over-estimate disproportionality and provide numbers that cannot be directly compared between different subgroups like regions…

-

Month 3: Only a crisis if you thought 2010 was awful

The May numbers are out and they begin to show how the pandemic is affecting things later in the pipeline from investigation to removal. Remember that investigations take about 60 days from intake. Intakes were down 6% in March and 16% in April from their expected numbers based on historical trends. Closures on investigations in…

-

What happened in March?

Florida DCF abuse intakes were down 17% in March. We have no idea what we will see in April.

-

54 pages about 49 kids: The children who refused placement in Hillsborough County

Back in August, an ad hoc committee of the juvenile justice board in Hillsborough issued a lengthy report on the state of placement instability in their circuit. The report diagnosed the phenomenon of children refusing placement as a major source of that instability. The committee concluded that “children under the care and custody of Florida’s…